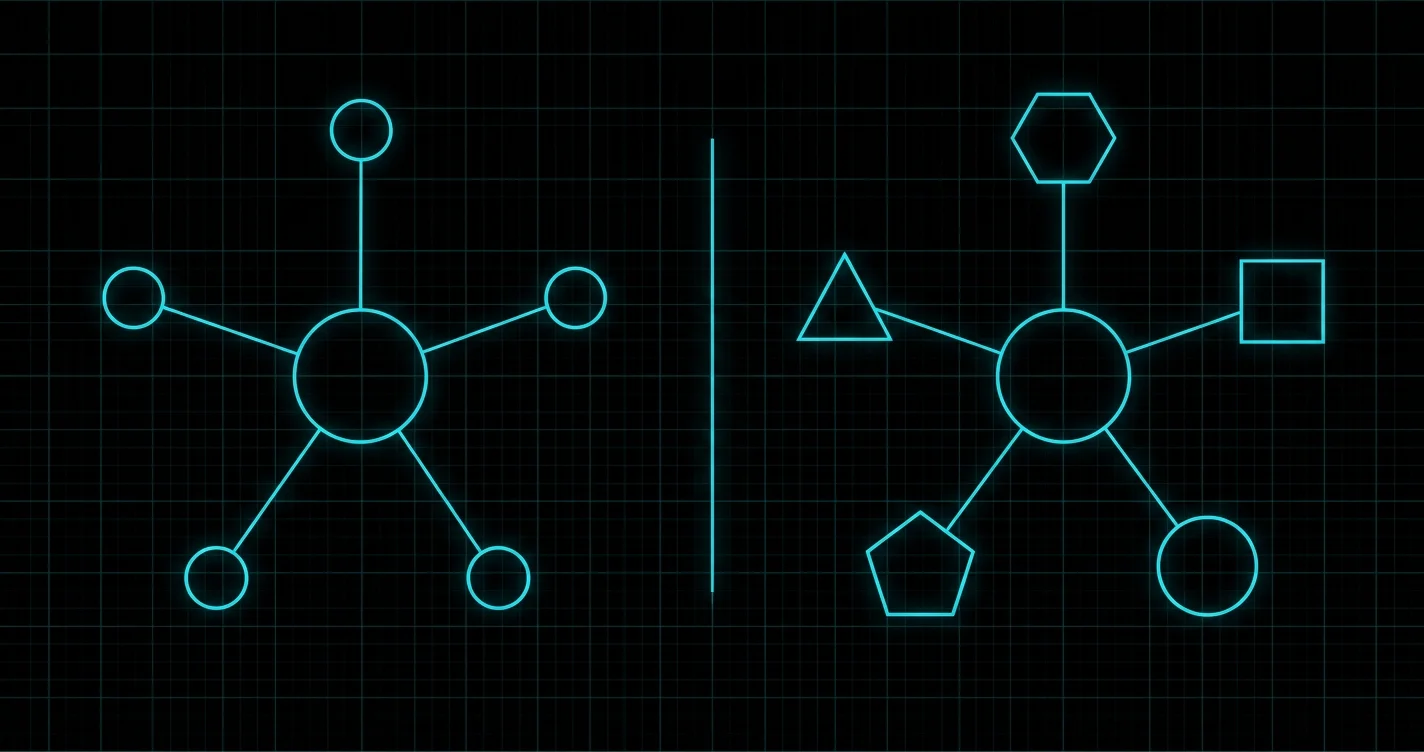

If you spend any time on AI Twitter or YouTube, you have seen the words 'multi-agent' and 'multi-model' used as if they meant the same thing. They do not. They describe two completely different architectures, built to solve different problems, and the difference matters most for the job that no AI demo ever advertises: catching the moments when your AI is confidently wrong.

This guide draws a clean line between the two. It explains what builder Nate Herk actually demos when he shows off Claude's new agent teams feature. It explains what TrueStandard does when it runs your draft through four to five different models in parallel. And it explains why one of these architectures is the right tool for production work and the other is the right tool for verification. Using one as a substitute for the other is a category error that will eventually publish a wrong answer in your name.

Why these terms get confused

Both terms include the word 'multi.' Both involve more than one AI doing work in parallel. Both produce visibly impressive demos. The casual reader sees 'I have five agents working together' or 'I have five models checking my work' and concludes they have heard the same idea twice. This conflation is not malicious. It is the predictable result of two technical concepts being marketed in the same week to the same audience. But the underlying mechanics differ in a way that determines whether you can trust the output, and they are worth understanding before you bet a piece of published content on the result.

What multi-agent actually is

A multi-agent system is several AI agents, usually all running on the same underlying model, that have been given different roles and asked to coordinate on a complex task. In Nate Herk's April 2026 demo of Claude's new agent teams feature, he spawned three agents (a front-end developer, a backend developer, and a QA agent) and asked them to build a working full-stack web app together. All three agents ran on Claude Sonnet 4.6 (here is why Sonnet is the right default for most Claude workloads). Their differentiation came from their role descriptions and the files they were allowed to edit, not from any difference in the model behind them.

How agent teams work in practice

A team lead or main session creates the team and assigns each agent a specialized role with its own files to own. The agents share a task list and can send messages directly to each other rather than going through the team lead. They work in parallel (the front-end dev does not have to wait for the backend dev to finish) and they react to each other in real time. In Nate's demo, the QA agent flagged three issues with the first build, sent the work back to the developers, and the team iterated until QA passed. The output was a polished landing page from a single short prompt.

What multi-agent is good for

- Parallel construction work where different specialists can make progress at the same time

- Tasks that benefit from real-time coordination, like front-end and back-end developers checking in with each other while building

- Workflows with clear dependencies where one role hands off to another in a defined sequence

- Production work where you can afford the extra tokens to have one agent QA the work of another

What multi-agent costs

Token usage scales linearly with the number of agents. A team of three agents uses roughly three times the tokens of a single agent on the same task, plus the coordination overhead between them. Nate explicitly recommends staying between two and five agents per team. Beyond that, the token cost rises faster than the quality improvement, and the team starts to spend more time coordinating than working. Multi-agent is a parallel-execution architecture, not a verification architecture.

What multi-model actually is

A multi-model system is several different LLMs, from different labs, each trained on different data with different objectives, each given the same input and asked to produce its own answer. In TrueStandard's case, that means sending the same content to Claude, GPT, Gemini, and one or two others in parallel, then comparing the answers and surfacing the places where they disagree. The differentiation between the models is structural. They have different training data, different model architectures, and different fine-tuning priorities. That is a deeper difference than role descriptions alone.

How multi-model verification works in practice

You paste your draft or your question into the system. The system fans the input out to four or five different models simultaneously. Each model produces its own answer based on its own training. The system compares the answers and highlights every claim where the models agree (high confidence, likely correct) and every claim where they disagree (uncertain, verify independently before publishing). The whole process takes about 60 seconds. There is no orchestration, no role assignment, no agent-to-agent messaging. Just parallel queries with the answers laid side by side.

What multi-model is good for

- Fact-checking specific claims in a draft

- Finding the moments when a single confident model is actually wrong, by comparing its answer to four others that draw on different training data

- Generating evidence trails. Five model answers to the same question are a much better record than one

- Publication-grade quality control on AI-assisted content where being wrong has a real cost

What multi-model costs

Multi-model verification costs roughly the same as a single query to each of the constituent models, run in parallel. Because the models do not coordinate or iterate, there is no overhead beyond the raw inference cost. For most published content, that works out to a few cents per draft and 60 seconds of wall time. The expensive part of verification is not the inference. It is the editor or writer time you save by knowing exactly which claims to spot-check.

The critical difference: shared blind spots vs independent perspectives

Here is the difference that determines whether either architecture can verify AI output. A multi-agent system, where every agent runs on the same underlying model, has shared blind spots. If Claude Sonnet 4.6 has been trained on data that contains a wrong fact, then every agent in the team (the developer, the QA agent, the team lead) will draw on that same training data. The QA agent is not going to flag a wrong fact that the model was trained to confidently believe. It will faithfully confirm the fact and move on. This is not a flaw in agent teams. It is the design. Agent teams are built to coordinate execution, not to introduce dissenting perspectives.

A multi-model system, where each model comes from a different lab with different training data, has independent blind spots. Where Claude might confidently confirm a wrong fact, GPT or Gemini may flag it as suspicious because their training covered the area differently. Where GPT might hallucinate a citation, Claude may correctly identify it as nonexistent. The architecture is not magic. It just gives you four or five different priors on the same question, and the moments when those priors disagree are the moments worth your attention.

This is why TrueStandard uses multi-model and not multi-agent for verification. We did not pick the architecture because it sounded better. We picked it because it is the only architecture in 2026 that can structurally catch the kind of error that single-model self-verification will always miss, no matter how many agents you wrap around it. Multi-agent is the right tool for building things. Multi-model is the right tool for checking that what you built is actually true.

A real example: when agent teams miss what multi-model catches

Imagine you ask a Claude agent team to draft a B2B blog post about AI adoption rates in financial services. The team lead spawns a research agent, a writer agent, and a fact-checker agent. The research agent finds and cites a statistic: 'According to a 2025 Gartner survey, 73 percent of mid-market financial services firms have deployed AI in production.' The writer agent incorporates the statistic into the post. The fact-checker agent reviews the draft and approves it. The team ships you the finished draft.

Here is the problem. That Gartner statistic is a hallucination. There is no such survey. The research agent generated the citation because Claude Sonnet 4.6, in this hypothetical, happens to have a confident but incorrect prior about a survey that does not exist. The fact-checker agent, also running on Claude Sonnet 4.6, has the same prior. It looks at the citation, recognizes the format, has no internal flag for the statistic being wrong, and confirms it. The writer agent never had reason to question it in the first place. Three agents, three approvals, one fabricated statistic now sitting in your published draft.

Now imagine you run the same draft through a multi-model verification step before publishing. The system sends the post to Claude, GPT, Gemini, and two other models. Claude confirms the statistic — its training matches the original agent team's training. GPT, trained on different data, flags the statistic as one it cannot find a source for. Gemini does the same. Two of the five models disagree on this specific claim. The system surfaces the disagreement to you. You spend 30 seconds checking the original Gartner site, confirm the citation does not exist, and edit the draft before publishing. Total time saved compared to manual fact-checking: about 30 minutes per article, plus the prevented embarrassment of publishing a fabricated statistic to your audience.

This is not a hypothetical edge case. Independent benchmarks put hallucination rates for frontier models in the 17 to 34 percent range on factual claims. For the categories of claims B2B writers and journalists actually care about (statistics, quotes, citations, dates, attributions) the rate is high enough that publishing AI-assisted content without multi-model verification means accepting a steady drip of errors into your work.

When to use each architecture

These are not competing tools. They are tools for different jobs. Use them together where each one fits.

Reach for multi-agent when...

- You need to coordinate parallel work like building software, drafting and reviewing in alternating passes, or processing a queue of tasks

- Different parts of the job need genuinely different specializations and the cost of role coordination is worth paying

- The bottleneck is execution speed, not accuracy verification

- You are building something (a website, a workflow, a pipeline) rather than checking something for correctness

Reach for multi-model when...

- You need to check whether AI output is actually correct

- You are about to publish AI-assisted content where the cost of being wrong is higher than the cost of verifying

- Your work depends on factual claims, statistics, quotes, attributions, or dates

- You want an evidence trail showing which claims are confirmed by multiple independent models versus which need human spot-checking

What writers, journalists, and B2B teams should actually do

Use both architectures where each one fits. Build with multi-agent. Verify with multi-model. Do not try to substitute one for the other.

Drafting and producing content

Use a single AI model directly, or a multi-agent setup if you have a workflow that benefits from parallel specialized roles (a researcher feeding a writer feeding an editor, for example). Most content work does not need multi-agent at all — single-model drafting is faster and simpler, and the quality difference is small for typical writing tasks.

Fact-checking and verification

Use multi-model verification, not multi-agent. Fact-checking exists to introduce a perspective the original draft did not contain, and a multi-agent system built on a single underlying model cannot structurally do that. TrueStandard runs your draft through four to five different models from different labs in parallel, in 60 seconds, and surfaces every claim where the models disagree.

Pre-publish quality control

Combine both. Have the production work done by whatever single-model or multi-agent setup you prefer, and add a multi-model verification step before any AI-assisted content reaches an audience. The verification step does not replace editorial judgment. It tells you exactly where to apply that judgment instead of forcing you to spot-check every sentence.

Decision framework: which architecture for which job

If you remember nothing else from this guide, remember this table.

| If your job is... | Use... | Because... |

|---|---|---|

| Building software, websites, or pipelines | Multi-agent (agent teams) | Parallel specialized execution beats sequential single-agent work |

| Drafting articles, emails, posts | Single model (Sonnet 4.6 default) | Multi-agent is overkill for most writing tasks |

| Coordinating parallel work across roles | Multi-agent (agent teams) | Direct messaging and shared task lists are the value prop |

| Fact-checking AI-generated claims | Multi-model verification (TrueStandard) | Different training data is the only structural defense against shared blind spots |

| Pre-publish QA on AI-assisted content | Multi-model verification (TrueStandard) | Single-model self-verification has known ceilings around 60-70% recall |

| Original analysis on contested topics | Multi-model verification on the result | Reasoning differences between models surface contested claims faster than any single model can |

Notice the pattern. Multi-agent owns construction. Multi-model owns verification. Trying to use one for the other is a common architecture mistake AI builders make in 2026, and it is the mistake that quietly publishes wrong answers in production. We built TrueStandard for the four rows in this table that say 'multi-model verification.' Paste your draft, four to five models from different labs check the claims in parallel, every disagreement surfaced in 60 seconds.

Frequently Asked Questions

What is the difference between multi-agent and multi-model AI?

Multi-agent means several AI agents, usually running on the same underlying model, given different roles and asked to coordinate on a complex task. Think of a frontend developer agent, a backend developer agent, and a QA agent all running on Claude Sonnet 4.6. Multi-model means several different LLMs from different labs, each trained on different data with different objectives, each given the same input and asked for their own answer. In practice, that means sending the same draft to Claude, GPT, and Gemini in parallel and comparing the answers. Multi-agent is built for parallel execution. Multi-model is built for verification through independent perspectives.

What are Claude's agent teams and what do they do?

Agent teams are an experimental Claude feature released in early 2026 that lets you spawn multiple specialized AI agents that share a task list, can message each other directly, and work in parallel on different parts of a complex job. Builder Nate Herk's most-cited demo uses three agents (front-end developer, backend developer, and QA) to build a complete web app from a single prompt. All three agents typically run on the same model (Claude Sonnet 4.6 in his demos), differentiated by their role descriptions and the files they own rather than by any difference in the model itself.

Can a multi-agent system fact-check itself?

Not reliably. If every agent in the team runs on the same underlying model (the standard configuration) then every agent shares the same training data, the same blind spots, and the same incorrect priors. A QA agent built on Claude Sonnet 4.6 will faithfully confirm a wrong fact that Claude Sonnet 4.6 was trained to believe, because the QA agent has no perspective the original agent did not also have. This is called homophily, and it is the structural reason single-model self-verification plateaus around 60 to 70 percent recall on factual errors. Multi-model verification breaks the failure mode by introducing models that were trained on different data.

When should I use multi-model verification instead of multi-agent?

Use multi-model verification any time the goal is to check whether AI output is actually correct, especially before publishing AI-assisted content. Multi-model is the only architecture in 2026 that can structurally catch errors that single-model self-verification will systematically miss, because the value comes from independent perspectives: different training data, different objectives, different blind spots. Use multi-agent for parallel construction work where the goal is execution speed and quality coordination, not error detection. The two architectures are not competitors. They solve different problems.

Can I combine multi-agent and multi-model?

Yes, and you should if your workflow involves both production and verification. Have a multi-agent team produce the work. A writing team, for instance, might spawn research, drafting, and editing agents. Then add a multi-model verification step at the end, before publishing, to catch the errors the single-model agent team will not catch on its own. The combination is much stronger than either architecture alone. TrueStandard handles the multi-model verification layer; tools like Claude's agent teams handle the multi-agent production layer.

Does multi-agent or multi-model cost more in tokens?

Multi-agent typically costs more per task than multi-model verification. A team of three agents working in parallel uses roughly three times the tokens of a single agent on the same task, plus coordination overhead between agents. Multi-model verification costs roughly the same as one query to each of the constituent models. For a four to five model setup, that is comparable to running the original query four or five times in parallel. For published content, multi-model verification typically costs a few cents per draft and saves 30 to 60 minutes of manual fact-checking.

What is the most common mistake AI builders make with these architectures?

Treating multi-agent as a replacement for multi-model verification. Builders see a QA agent in their multi-agent team, assume the QA agent is fact-checking the work, and ship the output without independent verification. The QA agent is reviewing structure, completeness, and obvious bugs. It is not catching factual hallucinations that the underlying model was trained to confidently believe. The fix is to add a multi-model verification step before publishing, not to add more agents to the team.

Do writers and content teams need multi-agent setups?

Most content workflows do not need multi-agent. A single high-quality model like Claude Sonnet 4.6 handles the vast majority of drafting, editing, and summarization work at quality you cannot tell apart from a multi-agent team. Where multi-agent is genuinely useful for content teams is in parallel research-and-writing pipelines, say a research agent and a writing agent sharing notes. Even there, the gain over single-model drafting is small for most use cases. What content teams actually need is multi-model verification, applied at the publish step, regardless of what produced the draft in the first place.

Keep reading

Why AI Made Writing Faster but Publishing Slower

Drafting got faster. Verification did not. The work didn't disappear — it moved to the step right before your name goes on it.

TrueStandard vs FactCheckTool

These tools look similar and solve opposite problems. One tells you if the media you're consuming is fake. The other tells you if the draft you're about to publish is true.

Your Newsletter Is One Bad Stat From Losing Every Sponsor

Solo operators ship AI-assisted content under deadline with no editor. The math only works if subscribers trust you. Here is what newsletter operators need to verify before send.

Why AI Citations Keep Showing Up Wrong

A 12-fold rise in fake biomedical references, four legal sanctions in 30 days, public defenders flooded with ChatGPT case theories. The same failure shape, across professions.

California's Verify Every AI Output Rule

Three states proposed or enforced 'independent verification' for AI work in 30 days. Here is what 'independent' actually requires.

Build With Multi-Agent. Verify With Multi-Model.

These are different tools for different jobs. Use multi-agent for parallel construction. Use multi-model for catching the errors that single-model agent teams structurally cannot catch. TrueStandard runs your draft through four to five different models from different labs in 60 seconds, surfacing every disagreement before your readers see it.

Start Verifying →